ISR Didn't Work for Our Multi-Tenant Next.js App: Here's What We Built Instead

How a silent Next.js 15 limitation pushed us into a self-rendering Azure Blob architecture.

The setup

We run a multi-tenant platform. One codebase, one database, hundreds of customers. Each customer gets a subdomain — acme.ourapp.com, widgets.ourapp.com, and so on. Each subdomain shows that customer's branding, their directory of team members, their content.

The interactive parts worked beautifully. Users could log in, book appointments, make transactions — everything our dynamic app does well.

But there was a hole.

The public pages were invisible to search. Every customer's site served the same generic metadata. No structured data. No per-tenant sitemaps. Google couldn't tell one customer from another, because as far as Google could see, they were all the same page.

We needed to fix this. Fast, without rewriting the app, and without adding per-customer operational overhead. The goal: every new customer we onboarded should be SEO-ready the moment they signed up.

The obvious path

The textbook Next.js answer is Incremental Static Regeneration — ISR. You tag your pages with a revalidation interval. Next.js caches the rendered HTML. When the interval expires, it re-renders in the background. For bonus points, you can plug in your own cache storage. We chose Azure Blob Storage, since we already had an Azure subscription.

A day of work, mostly. Build the cache handler. Wire it into the framework config. Deploy.
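For readers who have not written one, a custom cache handler has roughly the shape below. This is a minimal sketch, not the handler from this project: the class name and the in-memory Map standing in for Azure Blob Storage are assumptions, and the method names follow the get/set/revalidateTag interface that Next.js documents for custom handlers.

```typescript
// Sketch of a custom cache handler. A Map stands in for Azure Blob Storage;
// a real handler would read and write blobs instead.
type CacheEntry = { value: unknown; lastModified: number; tags: string[] };

class InMemoryCacheHandler {
  private store = new Map<string, CacheEntry>();

  // Looks up a cached entry by key.
  async get(key: string): Promise<CacheEntry | undefined> {
    return this.store.get(key);
  }

  // Stores a rendered entry together with its tags.
  async set(key: string, data: unknown, ctx: { tags?: string[] }): Promise<void> {
    this.store.set(key, { value: data, lastModified: Date.now(), tags: ctx.tags ?? [] });
  }

  // Drops every entry carrying at least one of the given tags.
  async revalidateTag(tags: string | string[]): Promise<void> {
    const wanted = Array.isArray(tags) ? tags : [tags];
    this.store.forEach((entry, key) => {
      if (entry.tags.some((t) => wanted.indexOf(t) !== -1)) this.store.delete(key);
    });
  }

  // Per-request hook; there is nothing to reset in this sketch.
  resetRequestCache(): void {}
}

// Wiring in next.config.js (config fragment, shown as a comment):
//   module.exports = {
//     cacheHandler: require.resolve("./cache-handler.js"),
//     cacheMaxMemorySize: 0, // turn off the default in-memory cache
//   };
```

The class is only useful if the framework actually calls get and set, which is the part that failed for us.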

The handler loaded correctly. Our startup logs even said so:

Using custom cache handler AzureBlobCacheHandler

Green checkmark. Ship it.

Then the pages still rendered fresh every time.

Not once did the handler's get or set function fire. No cached HTML ever made it into blob storage. We watched the logs, we added extra debug statements, we stared at the code for hours. The handler was active. The framework was acknowledging it. And yet, nothing was actually being cached.

No error. No warning. No diagnostic. Just a mismatch between what the framework was reporting and what was actually happening.

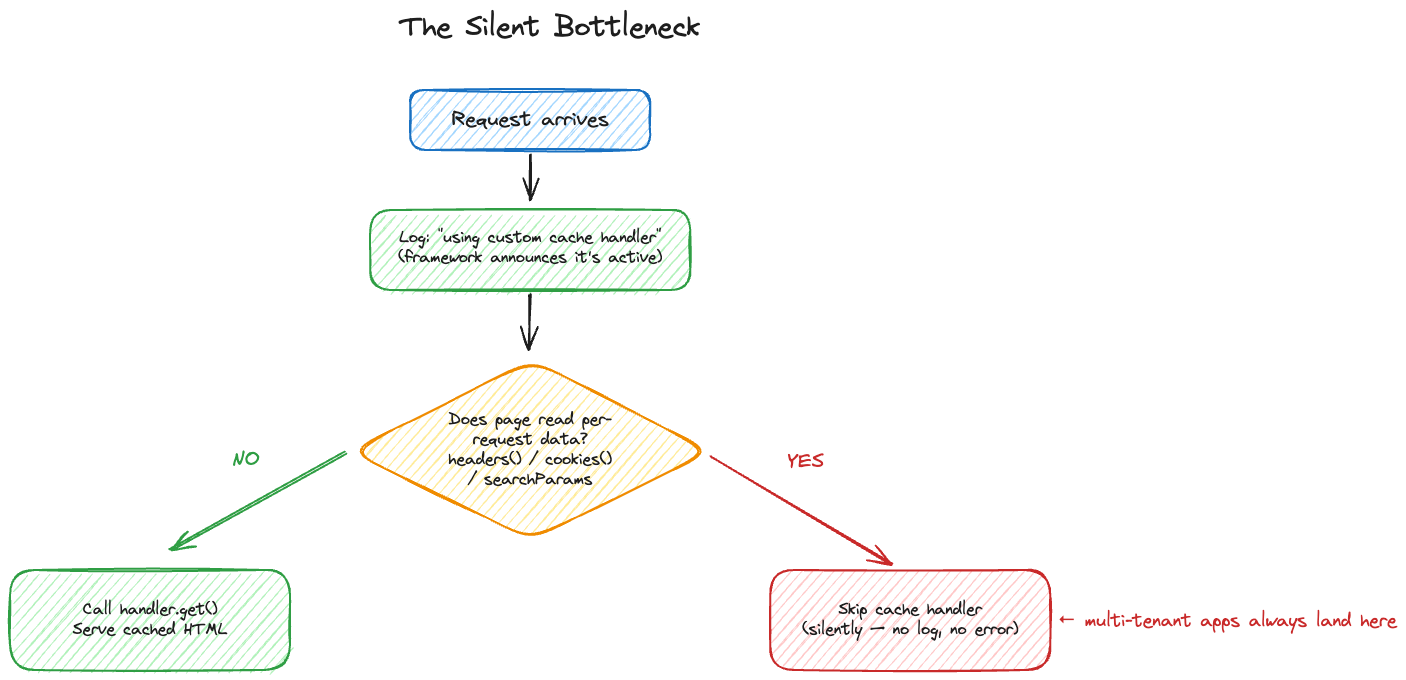

Finding the bottleneck

Docs, GitHub issues, Stack Overflow — nothing. We stripped the test case down to a page with a single word, swapped Azure credentials, swapped containers. The handler always loaded, and its methods never fired. External debugging exhausted, we went into the Next.js 15 source itself. That's where the bottleneck became visible.

Buried in the caching logic, there's a check the framework runs before calling the cache handler: does this page read any per-request context? If yes, the page is classified as "fully dynamic", and dynamic pages skip the cache handler entirely. In the Next.js 15 App Router, the specific triggers are:

- headers(): reading any request header

- cookies(): reading or setting cookies

- searchParams received as a page prop: reading URL query parameters

- draftMode(), and request-scoped fetches flagged as dynamic

Touching any one of these flips the page into dynamic mode. The cache handler still loads. The framework still announces "using custom cache handler" at startup. But the get and set methods you registered are never actually called at request time. No warning, no log, no hint that your caching layer is sitting idle.

This is a defensible design decision by the Next.js team. A page that reads per-request context usually shouldn't be cached with a global cache key; that's how one user's cookies get served to another user. For most applications, the rule prevents a whole class of security bugs.

But for multi-tenant apps that route by subdomain, reading the Host header is not optional. It's the single input that tells the code which customer's page to render. One app, many subdomains, and headers() is how the code knows which subdomain is asking. Remove it and every customer's page becomes identical, which defeats the entire architecture.
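To make the dependency concrete, here is a minimal sketch of the tenant lookup. The function name and the single-label subdomain rule are assumptions for illustration; ourapp.com is the example domain used earlier in this post.

```typescript
// Hypothetical helper: derive the tenant slug from a Host header value.
// Returns null when the host is not a single-label subdomain of rootDomain.
function tenantFromHost(host: string | null, rootDomain: string = "ourapp.com"): string | null {
  if (!host) return null;
  // Normalize case and strip an optional ":port" suffix.
  const hostname = host.toLowerCase().split(":")[0];
  const suffix = "." + rootDomain;
  if (!hostname.endsWith(suffix)) return null;
  const subdomain = hostname.slice(0, -suffix.length);
  // Accept exactly one DNS label (letters, digits, inner hyphens).
  return /^[a-z0-9]([a-z0-9-]*[a-z0-9])?$/.test(subdomain) ? subdomain : null;
}
```

In a Next.js 15 server component the input would come from something like `(await headers()).get("host")`, and that call is exactly what opts the page into dynamic rendering.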

So the rule that made sense for most Next.js applications actively blocked apps using subdomain-based multi-tenancy. ISR was unreachable. The cache handler we'd built was, by design, never going to run. No configuration flag would change it.

This isn't a niche gripe, either. The same wall shows up in open Next.js discussions: #45457 — Multi Tenant Website + ISR + Dynamic Routes is the exact scenario with no resolution, and #82571 — Next.js and Vary acknowledges more broadly that caching variants like this is currently unsupported by the framework. It's a real, unresolved limitation, not a misconfiguration.

That was the bottleneck. Once we saw it clearly, the next question became what to build instead.

The dead ends

- Remove the Host header dependency. Every public page needs to know which customer is being served, so there is no tenant-independent part to split out and cache.

- Move tenant logic to the client. Makes caching possible, but crawlers get a generic shell. SEO back to zero.

- Switch hosting providers. Not practical, and lock-in forever.

- Put a CDN in front. Works, but a separate product, separate billing, new failure modes, and another layer of invalidation to maintain.

None of these fit. We needed to cache tenant-specific pages without removing the Host header, without going client-side, without switching hosting, and without adopting a CDN.

The pattern

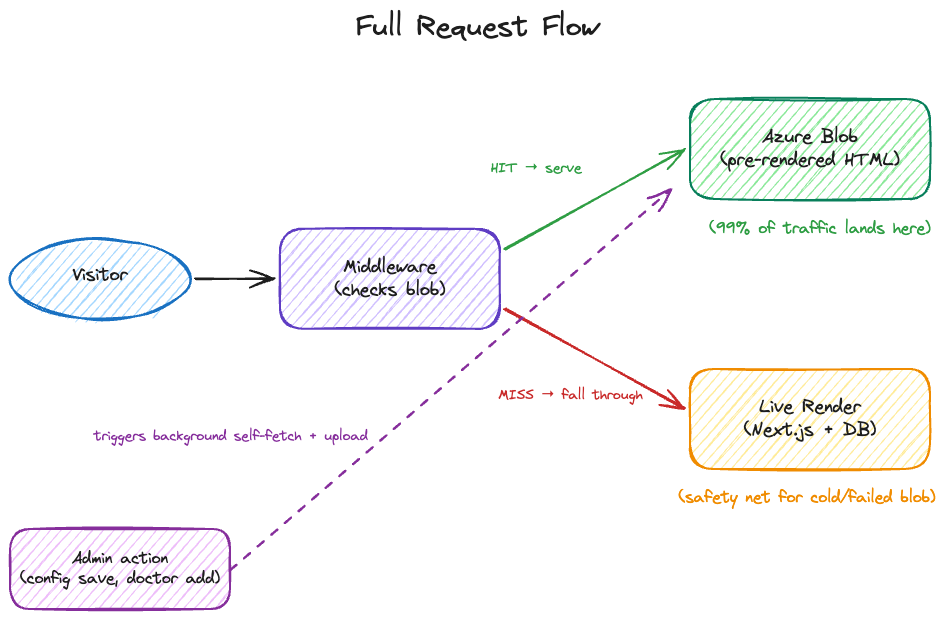

The framework was rendering pages perfectly. That part worked. The problem was entirely on the caching side. So we stopped trying to convince the framework to cache and caught the output ourselves.

When something changes — an admin updates branding, a team member is added, a location is edited — a background process kicks off. It makes HTTP requests to our own application, walking through each public page for that customer, with a special header that tells the system "this is a self-fetch, don't try to serve from cache" to prevent infinite loops.
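The self-fetch step can be sketched as follows. The header name, the function name, and the injected fetch parameter are all assumptions made for illustration; the post only specifies that a special header marks the request as a self-fetch.

```typescript
// Hypothetical header name; the real one is not given in this post.
const SELF_FETCH_HEADER = "x-self-fetch";

// Minimal shape of the fetch function we rely on, so a fake can be injected.
type FetchLike = (
  url: string,
  init: { headers: Record<string, string> }
) => Promise<{ ok: boolean; status: number; text(): Promise<string> }>;

// Requests each public path for one tenant and collects the rendered HTML.
async function renderTenantPages(
  tenant: string,
  paths: string[],
  fetchFn: FetchLike,
  rootDomain: string = "ourapp.com"
): Promise<Map<string, string>> {
  const rendered = new Map<string, string>();
  for (const path of paths) {
    const url = `https://${tenant}.${rootDomain}${path}`;
    // The marker header tells the app to render live instead of serving a cached copy.
    const res = await fetchFn(url, { headers: { [SELF_FETCH_HEADER]: "1" } });
    // Skip failed renders so an error page is never collected.
    if (!res.ok) continue;
    rendered.set(path, await res.text());
  }
  return rendered;
}
```

Skipping non-OK responses is a design choice in this sketch: a failed render leaves whatever was stored previously untouched rather than replacing it with an error page.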

The response to each fetch is the fully rendered HTML, identical to what any real visitor would receive. Same components, same styling, same metadata, same structured data.

We take that HTML and upload it to Azure Blob Storage's static website hosting. More on why that choice below. Each customer's pages become files in a folder named after their subdomain, scoped by environment so local, staging, and production each have their own space.
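One way to express that folder layout is a small path-mapping function. This is a sketch under assumptions: the function name and the environment/tenant/path ordering are illustrative, not the exact layout we use.

```typescript
// Hypothetical mapping from (environment, tenant, URL path) to a blob path.
// Every page becomes an index.html inside a folder that mirrors its URL.
function blobPathFor(env: string, tenant: string, pathname: string): string {
  // Drop any query string or fragment; only the path identifies the page.
  const clean = pathname.split("?")[0].split("#")[0];
  const segments = clean.split("/").filter((s) => s.length > 0);
  // Refuse path traversal so one tenant can never write into another's folder.
  if (segments.some((s) => s === "." || s === "..")) {
    throw new Error("unsafe path: " + pathname);
  }
  return [env, tenant, ...segments, "index.html"].join("/");
}
```

For example, `blobPathFor("production", "acme", "/team")` yields `production/acme/team/index.html`. The upload itself would go through the Azure SDK (for instance `BlockBlobClient.upload` from `@azure/storage-blob`, targeting the `$web` container that static website hosting serves from) and is omitted here.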

Now, when a real visitor arrives, our application's middleware intercepts the request at the edge before any page rendering happens. It checks whether we have a pre-rendered copy of the requested page in blob storage. If we do, it streams that HTML directly back to the visitor. If we don't — new customer, never rendered, Azure temporarily unreachable — it falls through to the framework's live rendering as a safety net.
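The decision the middleware makes can be sketched as a small function. The type names, the header name, and the injected lookup function are assumptions for illustration; the real middleware wraps this logic in the framework's request and response objects.

```typescript
// Hypothetical header name marking a self-fetch from the background renderer.
const SELF_FETCH_HEADER = "x-self-fetch";

// Returns the stored HTML for a blob path, or null if nothing is stored.
type CacheLookup = (blobPath: string) => Promise<string | null>;

type Decision =
  | { kind: "serve-cached"; html: string }
  | { kind: "render-live"; reason: "self-fetch" | "not-prerendered" | "storage-unreachable" };

async function resolvePublicPage(
  request: { blobPath: string; headers: Record<string, string | undefined> },
  lookup: CacheLookup
): Promise<Decision> {
  // Self-fetches must always render live, otherwise the renderer would capture its own cache.
  if (request.headers[SELF_FETCH_HEADER] === "1") {
    return { kind: "render-live", reason: "self-fetch" };
  }
  let html: string | null;
  try {
    html = await lookup(request.blobPath);
  } catch (_err) {
    // Storage being down must never take the site down: fall through to live rendering.
    return { kind: "render-live", reason: "storage-unreachable" };
  }
  if (html === null) {
    return { kind: "render-live", reason: "not-prerendered" };
  }
  return { kind: "serve-cached", html };
}
```

In Next.js middleware, a "serve-cached" decision would typically become a `new NextResponse(html, ...)` with a `text/html` content type, and "render-live" would become `NextResponse.next()`.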

A cached page comes back in about 30 milliseconds. The framework's renderer never sees most of the traffic.

There's no parallel renderer, no duplicated component logic, no templating engine. When a developer changes a button's color in React tomorrow, the next render cycle captures it automatically, because we're literally capturing the app's real output. The cached layer is purely additive: remove it and the app still works.

Why the substrate matters more than the pattern

The self-fetch-and-store pattern is old. Gatsby's DSG does it. Vercel's ISR does it internally. Prerender.io built a business on it. Prerendering isn't the interesting part of this post.

The interesting part is where the pre-rendered HTML lives.

Most prerender systems bundle the storage layer into their opinion. Gatsby puts the output in your build artifact. Vercel puts it in their managed blob. Prerender.io puts it in their managed cache. Each choice comes with baggage: build-time coupling, vendor lock-in, per-read compute cost, proprietary invalidation APIs.

Azure Blob Storage's static website hosting fits the shape of what we needed, even though Microsoft doesn't market it that way:

- Free HTTPS endpoint out of the box. No cert management, no CDN contract, no edge-compute fees. Microsoft operates the public web server; we just upload files.

- Near-zero app compute on reads. The middleware only relays a stored file from Azure; no page is rendered, and database queries for public pages go to zero.

- Invalidation is just overwrite. No cache-busting headers, no purge APIs, no TTL expiry. Upload a new index.html, new content goes live immediately.

- Filesystem-as-routing. Folders namespace by customer, by environment, by anything we want. No schema, no query planner, no connection pool.

- Already paid for. No new vendor relationship, no new line item on the cloud bill.

We briefly considered a Postgres cache table, but that's back to paying per-read compute and writing invalidation logic. We considered Redis: same story, plus a new dependency. Static website hosting dodged both.

The point isn't Azure specifically. S3 + CloudFront, Cloudflare R2 with custom domains, GCS: any of them could work. The point is that static website hosting products, designed for the deploy-once-serve-forever workflow, happen to be excellent substrates for a write-on-mutation, read-on-visit cache. They're the cheapest and simplest prerender cache you can build, and the one most developers never consider because it's marketed for the wrong use case.

The numbers

Before this system, every visit to every customer's public page triggered a database query and a full server render. Median server response time sat around 300 milliseconds. SEO metadata was generic. Google couldn't index customers individually.

After: median server response time for the same pages is around 30 milliseconds, measured from the same region. Database queries per visit dropped to zero. Each customer has unique metadata, structured data that qualifies for rich search results, and a per-tenant sitemap that auto-updates when team members change. New customers are search-ready the moment an admin saves their first configuration.

The cost model flipped. Database and server compute used to scale with visitor traffic. Now they scale with change frequency: how often admins update their content. For public pages that change rarely and are visited often, this is exactly the right tradeoff.

The takeaway

Next.js 15's ISR is gated on a dynamic-rendering check that quietly excludes subdomain-based multi-tenancy. No flag, no workaround — structurally unreachable. So we stopped arguing with the framework, kept what it was good at (rendering), and moved caching outside entirely.

The part worth stealing isn't the prerendering — prerendering is everywhere. It's the substrate choice. If you're serving pre-rendered HTML per tenant without owning a CDN, take a serious look at whatever static-website-hosting primitive your cloud provider offers. It's the cheapest cache you'll never think of.

Find the bottleneck clearly. Stop trying to argue past it. Build around it.

Experimented at HealthPilot — copilot for your health.